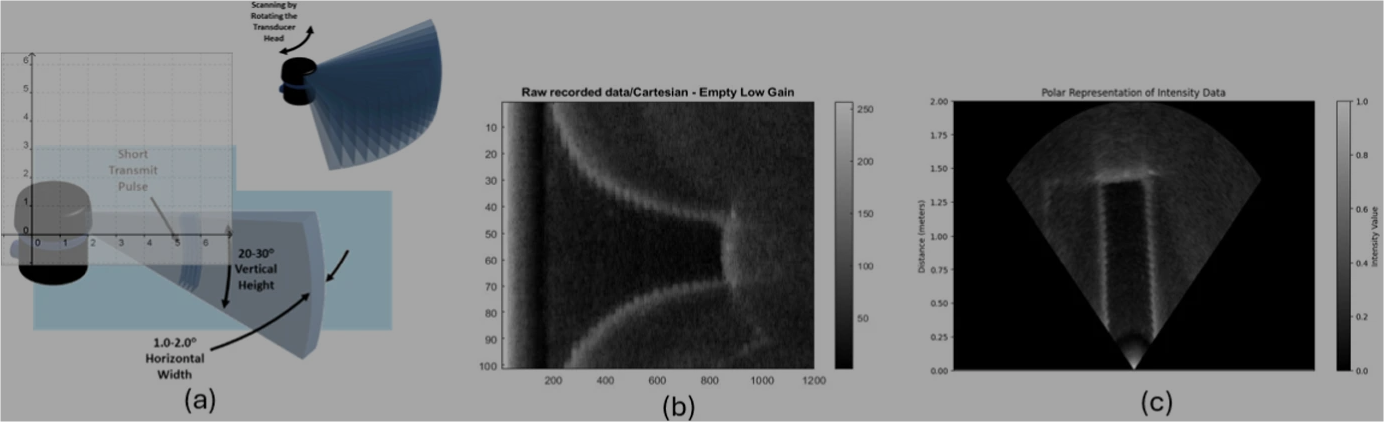

Researchers explored whether the Ping360 Scanning Sonar could be used for more than navigation, specifically whether it could detect and identify underwater objects. Using controlled pool experiments and deep-learning segmentation models, the study showed that, with the right preprocessing, the Ping360 can produce clear object detection results in near real time.

Abstract: This study investigates the feasibility of repurposing the Blue Robotics Ping360—a low-cost, mechanically scanned single-beam sonar—for underwater object detection beyond its navigation role. It addresses three key questions: (i) what challenges arise when interpreting navigation sonar data in cluttered or reflective environments; (ii) how effective is manual annotation when combined with segmentation models such as U-Net; and (iii) whether affordable sonar can support complex object-level perception. Controlled pool experiments were conducted to examine acoustic artifacts including reflections, shadows, and range-dependent distortions. A manually annotated dataset was used to evaluate classical and deep-learning-based segmentation methods. Results show that preprocessing—near-field exclusion, denoising, and polar resampling—significantly improves detection clarity. Leveraging GPU-accelerated and data-parallel processing, the framework achieves scalable, near-real-time performance aligned with high-performance computing principles. The dataset and code are publicly available to encourage further research in dynamic and multi-sensor underwater perception.
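To make the three preprocessing steps named in the abstract more concrete, here is a minimal sketch of near-field exclusion, denoising, and polar resampling. It is not the authors' code. It assumes a scan is stored as a list of beams (one per angle, evenly covering 360 degrees), each a list of echo intensities ordered by range. The function names, the median filter, the window width, and the nearest-neighbour mapping are all illustrative assumptions, and "polar resampling" is interpreted here as mapping the polar scan onto a Cartesian grid.

```python
import math
from statistics import median


def exclude_near_field(scan, n_near):
    """Zero the first n_near range samples of every beam (assumed near-field clutter)."""
    return [[0 if i < n_near else v for i, v in enumerate(beam)] for beam in scan]


def median_denoise(scan, width=3):
    """Median-filter each beam along range; windows are truncated at the edges.

    A median filter is an assumed stand-in for whatever denoising the study used.
    """
    half = width // 2
    return [
        [median(beam[max(0, i - half): i + half + 1]) for i in range(len(beam))]
        for beam in scan
    ]


def polar_to_cartesian(scan, size):
    """Nearest-neighbour resampling of a full 360-degree scan onto a size x size grid.

    The sonar sits at the grid centre; beam 0 points up and angles increase clockwise
    (an assumed convention). Pixels beyond the maximum range stay 0.
    """
    n_beams, n_samples = len(scan), len(scan[0])
    centre = (size - 1) / 2.0
    scale = (n_samples - 1) / centre  # range samples per pixel
    image = [[0] * size for _ in range(size)]
    for row in range(size):
        for col in range(size):
            dx, dy = col - centre, centre - row
            r = math.hypot(dx, dy) * scale
            if r > n_samples - 1:
                continue
            theta = math.atan2(dx, dy) % (2 * math.pi)
            beam = int(round(theta / (2 * math.pi) * n_beams)) % n_beams
            image[row][col] = scan[beam][int(round(r))]
    return image


def preprocess(scan, n_near=2, width=3, size=9):
    """Chain the three steps in the order listed in the abstract."""
    cleaned = median_denoise(exclude_near_field(scan, n_near), width)
    return polar_to_cartesian(cleaned, size)
```

Calling `preprocess(scan)` on such a list of beams returns a square image that could then be passed to a segmentation model. A practical pipeline would likely use array libraries and interpolation rather than pure-Python loops, which are kept here only for readability.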

Authors: Hasan, M. J.; Kannan, S.; Rohan, A.; Talaat, A. S.

Journal: The Journal of Supercomputing
